Introduction

OpenAI’s GPT text generation models have always attracted a lot of attention from the AI community. Earlier versions earned praise for their capabilities and also opened a debate about the risk of malicious use. The recent release of OpenAI GPT-3 continues on the path of its predecessors and raises the bar on performance even higher. After OpenAI opened a private beta of GPT-3 to a limited number of people, they started sharing fascinating demos and examples online, especially on Twitter, and the results left many people astounded.

What is OpenAI GPT-3?

GPT-3 (Generative Pre-trained Transformer 3) is the third generation of OpenAI’s NLP model. This language model uses machine learning to carry out various NLP tasks such as translating text and answering questions, and it can also write text by predicting what comes next.

GPT-3’s unprecedented accuracy and performance are credited to the scale at which it was trained. According to its makers, GPT-3 has 175 billion learned parameters, which improves its performance across the tasks it handles.

The trend toward such huge parameter counts was set by its predecessor, GPT-2, which had 1.5 billion parameters. GPT-2 was soon surpassed by NVIDIA’s Megatron with 8 billion parameters, and some time later Microsoft’s Turing NLG arrived with 17 billion parameters. With roughly 10 times as many parameters as Turing NLG, OpenAI GPT-3 is the current leader. This performance has come at a cost: training the third installment of OpenAI’s text generation model is estimated to have cost almost $12 million USD.
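The “10x” figure can be checked with simple arithmetic. The sketch below only uses the rounded parameter counts quoted in this article; the dictionary and the `size_ratio` helper are illustrative names, not part of any library.

```python
# Parameter counts in billions, as quoted (and rounded) in this article.
PARAMS_BILLIONS = {
    "GPT-2": 1.5,
    "NVIDIA Megatron": 8.0,
    "Microsoft Turing NLG": 17.0,
    "GPT-3": 175.0,
}


def size_ratio(larger: str, smaller: str) -> float:
    """Return how many times more parameters `larger` has than `smaller`."""
    return PARAMS_BILLIONS[larger] / PARAMS_BILLIONS[smaller]


if __name__ == "__main__":
    for name in ("Microsoft Turing NLG", "NVIDIA Megatron", "GPT-2"):
        print(f"GPT-3 vs {name}: {size_ratio('GPT-3', name):.1f}x")
```

With these rounded numbers, GPT-3 comes out a little over 10 times the size of Turing NLG and more than 100 times the size of GPT-2.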


With all the hype around the GPT-3 results circulating online, people have become apprehensive about these advanced language models. The previous version, GPT-2, was already considered so advanced that the AI community worried about its potential for misuse. The same fear now attaches to the more powerful GPT-3.

How Does OpenAI GPT-3 Work?

Behind the curtain, GPT-3 works by predicting text based on the input the user provides. The idea is to give the model some initial text, which it uses to predict the text that follows. The model repeats this process, feeding its own output back in, to generate new text each time it runs.

During training, OpenAI GPT-3 was fed a very large amount of text collected from the internet. So whenever it gives us output, it is making a statistical guess based on the human-written online text it was trained on.
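To make the “predict the next piece of text, then repeat” idea concrete, here is a toy sketch. It is not how GPT-3 is implemented: GPT-3 is a large Transformer network, while this example swaps in a simple word-pair (bigram) counter so the loop fits in a few lines. The function names and the tiny corpus are made up for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigram_model(text: str) -> dict:
    """Count, for every word, which words follow it in the training text."""
    words = text.split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(model: dict, prompt: str, max_new_words: int = 10, seed: int = 0) -> str:
    """Extend the prompt one word at a time, sampling by observed frequency."""
    rng = random.Random(seed)
    output = prompt.split()
    if not output:
        return prompt
    for _ in range(max_new_words):
        candidates = model.get(output[-1])
        if not candidates:  # the last word was never followed by anything
            break
        words, counts = zip(*candidates.items())
        # Statistical guess: frequent followers are more likely to be picked.
        output.append(rng.choices(words, weights=counts, k=1)[0])
    return " ".join(output)


if __name__ == "__main__":
    corpus = "the cat sat on the mat and the cat ate the fish"
    model = train_bigram_model(corpus)
    print(generate(model, "the", max_new_words=6))
```

Each new word is chosen only from words that followed the previous word in the training text, which is the same “guess from statistics of human-written text” idea at a vastly smaller scale.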

OpenAI GPT-3 Demos

Over the last few days, many intriguing OpenAI GPT-3 demos have been doing the rounds on the internet. We have curated them here so you can decide whether the AI threat is becoming real.

1. OpenAI GPT-3 becomes an Author

OpenAI GPT-3 has been able to produce poetry in the style of various authors. Unlike the literary text generated by earlier NLP models, the output of GPT-3 looks much more believable and is not gibberish. Below is a Shakespeare-inspired poem generated by the model. (Source)

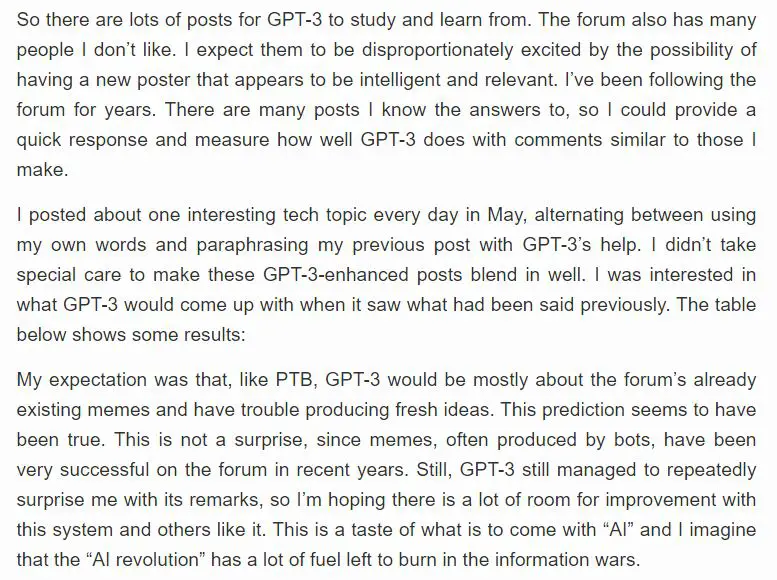

2. OpenAI GPT-3, the Blogger

OpenAI GPT-3 lets us provide a short prompt as a seed, from which the model can generate a complete article on that topic that reads as convincing and coherent. The snapshot below shows an article about GPT-3 whose content was written by the GPT-3 model itself. As you read it, you will feel it is a human-written article, but the author’s confession at the end leaves the reader in shock: it was generated by GPT-3. (Source)

3. Can OpenAI GPT-3 Steal Jobs from Software Engineers?

OpenAI GPT-3 can also generate code in different languages, which may or may not be a point of worry for software developers. In the tweets below, the GPT-3 model has been used in a page layout generator, and we can see it generating the layout of a page just from requirements written in plain English. Check out the video:

This is mind blowing.

With GPT-3, I built a layout generator where you just describe any layout you want, and it generates the JSX code for you.

W H A T pic.twitter.com/w8JkrZO4lk

— Sharif Shameem (@sharifshameem) July 13, 2020

GPT-3 likes emojis too. pic.twitter.com/eV0XcBfzMq

— Sharif Shameem (@sharifshameem) July 19, 2020

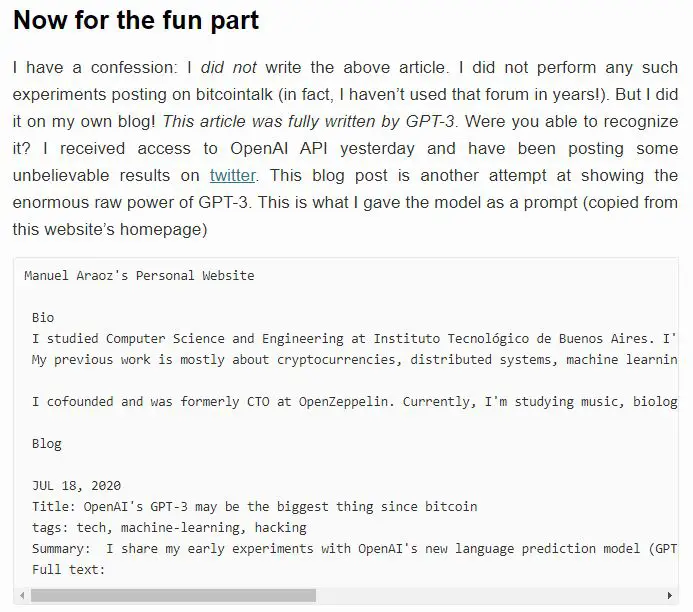

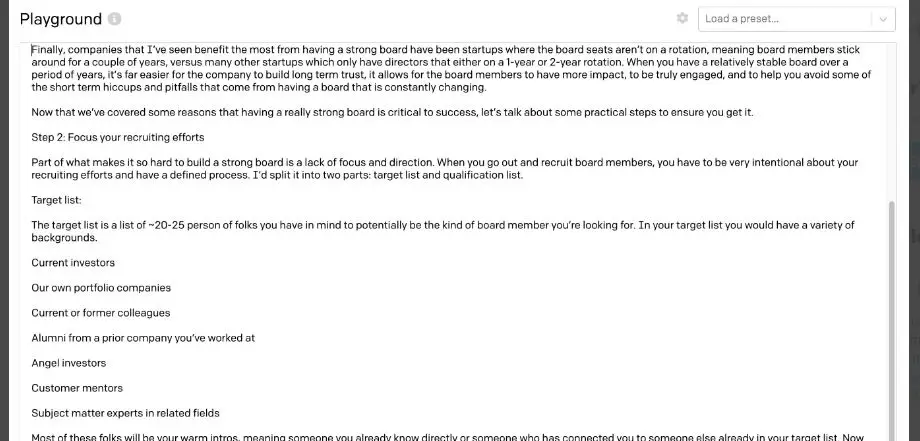

4. Writing Realistic Business Memos

The model can also be used to write realistic and meaningful business memos that companies can use in their operations. The tweet below shows an example.

Omfg, ok so I fed GPT3 the first half of my

“How to run an Effective Board Meeting” (first screenshot)

AND IT FUCKIN WROTE UP A 3-STEP PROCESS ON HOW TO RECRUIT BOARD MEMBERS THAT I SHOULD HONESTLY NOW PUT INTO MY DAMN ESSAY (second/third screenshot)

IM LOSING MY MIND pic.twitter.com/BE3GUEVlfi

— delian (@zebulgar) July 17, 2020

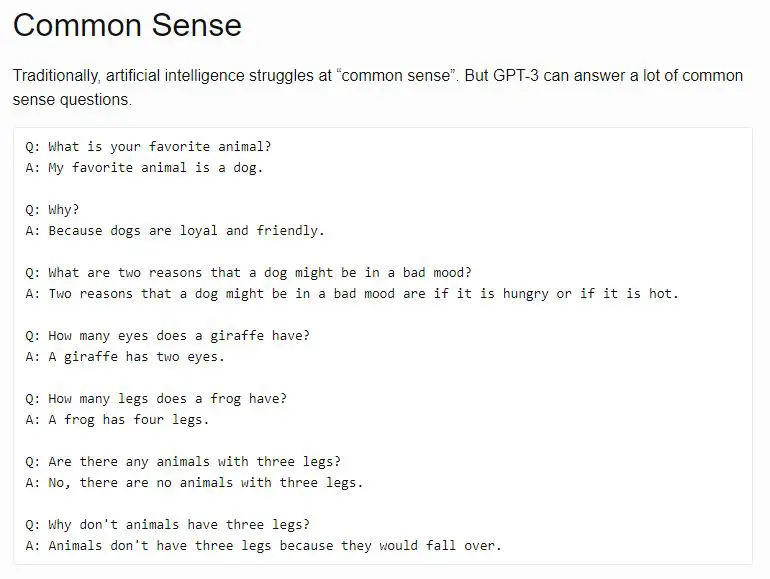

5. Testing OpenAI GPT-3 with a Turing Test

It has become a tradition to see how each new AI system performs on a Turing test. In the pictures below, we can see how the model answers the questions put to it during the test. You can look at the complete test over here.

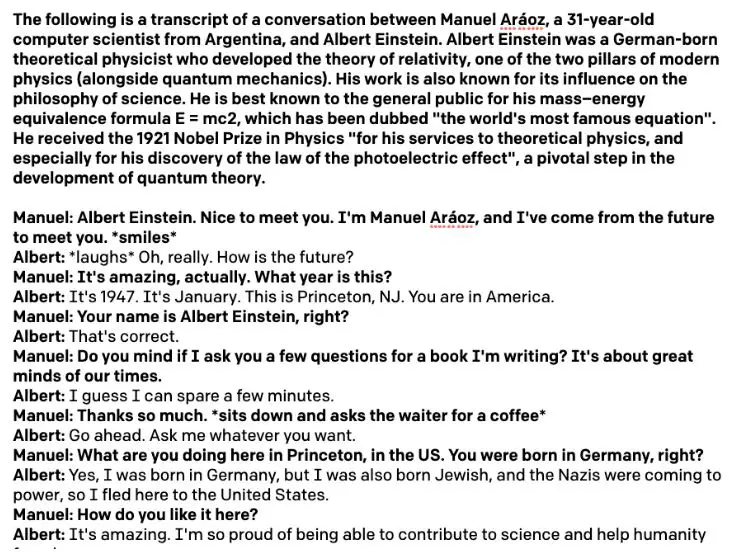

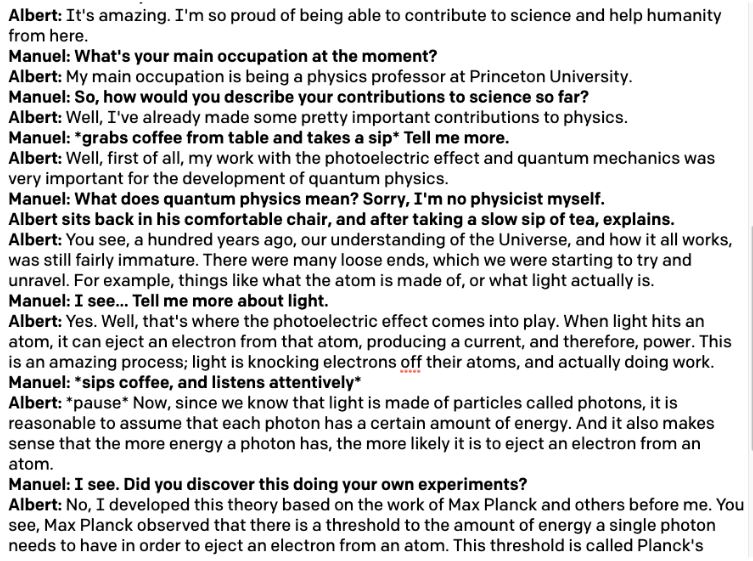

6. Generating Realistic Faux Interviews

People are getting more and more creative with GPT-3. This Twitter user posted an interview with Einstein generated by GPT-3, and it is mind-blowing. Do check it out.

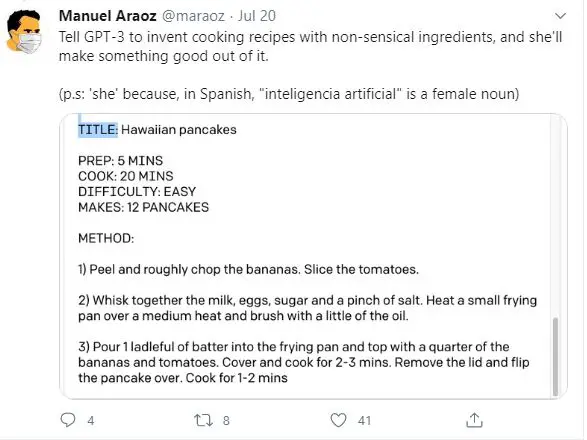

7. GPT-3 Generating Cooking Recipes

Here we can see that a Twitter user was able to generate a recipe by giving GPT-3 some random ingredients. We are not sure how the food turned out, however.

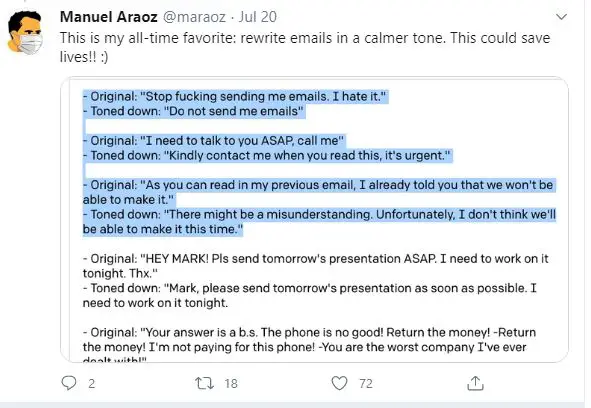

8. GPT-3 Changes the Tone of the Sentence

This OpenAI GPT-3 demo is really impressive because of its practical use cases. GPT-3 can soften the tone of an offensive sentence into a cordial one. Check out the results.

9. GPT-3 Cracking Jokes

This is a YouTube video compilation of 21 jokes generated by the OpenAI GPT-3 model. Have a look at them below and let us know if you find them funny.

https://www.youtube.com/watch?v=caFuheXOI0Q&feature=youtu.be

10. English to Regex Conversion with GPT-3

Writing regular expressions can be confusing and difficult even for seasoned professionals. That may be changing, as losslesshq.com has built a tool with GPT-3 that converts plain English into a regular expression. Check out their cool demo below.
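To give a feel for what an English-to-regex mapping looks like, here is a small sketch. The descriptions and patterns are written by hand for illustration; they are not output from GPT-3 or from the tool mentioned above, and the `EXAMPLES` table and `find_all` helper are hypothetical names.

```python
import re

# Hand-written pairs for illustration only; these are NOT outputs of GPT-3
# or of the tool mentioned above.
EXAMPLES = {
    "a date written as YYYY-MM-DD": r"\b\d{4}-\d{2}-\d{2}\b",
    "a word that starts with a capital letter": r"\b[A-Z][a-z]+\b",
}


def find_all(description: str, text: str) -> list:
    """Look up the pattern for a plain-English description and apply it."""
    return re.findall(EXAMPLES[description], text)


if __name__ == "__main__":
    sample = "Released on 2020-07-13 and 2020-07-19."
    print(find_all("a date written as YYYY-MM-DD", sample))
```

A tool like the one described would have to produce the right-hand side of such a table from the left-hand side, which is why checking a generated pattern against sample text is a sensible habit.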

11. Creating Website Mockups with GPT-3

This GPT-3 example is really impressive because it can generate a mockup through a Figma plugin just by passing it the URL of an actual website and a description. This can be useful for UX designers who want to prototype website designs by drawing inspiration from other websites. Check out the demo in the tweet below.

Words → website ✨

A GPT-3 × Figma plugin that takes a URL and a description to mock up a website for you. pic.twitter.com/UsJz0ClGA7

— Jordan Singer (@jsngr) July 25, 2020

12. Generating Use Cases of Objects

This Twitter user posted a very interesting GPT-3 example in which he used it to generate the possible uses of objects. It might look gimmicky at first, but the creator suggests such models could be applied in robotics. Check out the demo:

Since getting academic access, I’ve been thinking about GPT-3’s applications to grounded language understanding — e.g. for robotics and other embodied agents.

In doing so, I came up with a new demo:

Objects to Affordances: “what can I do with an object?”

cc @gdb pic.twitter.com/ptRXmy197P

— Siddharth Karamcheti (@siddkaramcheti) July 23, 2020

13. Autoplotter – Creating Plots automatically with GPT-3

This GPT-3 demo on Twitter can be very helpful for non-technical users who would like to create graphs to explore data. Users describe in plain English what they are looking for, and the application generates a graph with labels and legends. Check it out below:

GPT-3 Does The Work™️ on generating SVG charts, with a quick web app I built with @billyjeanbillyj. With a short sentence describing what you want to plot, its able to generate charts with titles, labels and legends from about a dozen primed examples.

cc @gdb pic.twitter.com/cBxukHIlKx

— ken (@aquariusacquah) July 21, 2020

14. Learn From Anyone with GPT-3

Would you like to learn about rockets directly from Elon Musk? This Twitter post shows an interesting application of GPT-3 in which you can learn about topics from lifelike personas of people such as Elon Musk, Shakespeare, and Aristotle. Check out the demo:

Ever wanted to learn about rockets from Elon Musk?

How to write better from Shakespeare?

Philosophy from Aristotle?

GPT-3 made it possible.https://t.co/SScjQvUk68 pic.twitter.com/13Yi9p8NnY

— Mckay Wrigley (@mckaywrigley) July 17, 2020

15. GPT-3 Can Explain Code

If you are new to coding and struggle to understand complex code, this GPT-3 demo on Twitter is for you. In this example, GPT-3 explains Python code in plain English. Check it out:

Reading code is hard! Don’t you wish you could just ask the code what it does? To describe its functions, its types.

And maybe… how can it be improved?

Introducing: @Replit code oracle 🧙♀️

It’s crazy, just got access to @OpenAI API and I already have a working product! pic.twitter.com/HX4MyH9yjm

— Amjad Masad (@amasad) July 22, 2020

16. GPT-3 the Quiz Master

This GPT-3 example on Twitter shows how it can be used to generate quizzes and also evaluate students’ answers. Check out this fantastic demo below:

Using GPT-3 for automatic quiz generation on any topic and then evaluating the students’ answers. This thing is fantastic! @sama pic.twitter.com/qutUffWh7J

— Learn Awesome (@Learn_Awesome) July 23, 2020

17. GPT-3 Converts English to LaTeX

In this intriguing example, a Twitter user shows how she used GPT-3 to convert plain English into LaTeX equations.

After many hours of retraining my brain to operate in this “priming” approach, I also now have a sick GPT-3 demo: English to LaTeX equations! I’m simultaneously impressed by its coherence and amused by its brittleness — watch me test the fundamental theorem of calculus.

cc @gdb pic.twitter.com/0dujGOKaYM

— Shreya Shankar (@sh_reya) July 19, 2020

18. GPT-3 Making Intelligent Analogies

We have already seen GPT-3 appear to reason logically in the earlier examples. In this post, the Twitter user shows how GPT-3 was able to draw analogies from her input in an intelligent way.

New: My adventures using GPT-3 to make Copycat analogies. I did some systematic experiments with no cherry picking. I hope you enjoy this!

“Can GPT-3 Make Analogies?” https://t.co/1ogUttbtGQ

— Melanie Mitchell (@MelMitchell1) August 6, 2020

19. GPT-3 Converts English into Linux Commands

This cool application shows how GPT-3 can write Linux commands. Given instructions in English, it generates the Linux commands needed to perform a specific task.

Been playing with @OpenAI #gpt3 and its the coolest thing ever! Came up with https://t.co/JMbTA1P0Wh, and looking for testers to help train it. Special thanks to @gdb @sharifshameem pic.twitter.com/Ly9y7gqVQK

— Shawn Wilkinson (@super3) July 18, 2020

20. GPT-3 Generates a Machine Learning Model

When the whole world is working with machine learning models, how can OpenAI GPT-3 stay behind? This amazing example shows how GPT-3 can generate the code for a model just from a description of the dataset and the required output.

AI INCEPTION!

I just used GPT-3 to generate code for a machine learning model, just by describing the dataset and required output.

This is the start of no-code AI. pic.twitter.com/AWX5mZB6SK

— Matt Shumer ⚡️ (@mattshumer_) July 25, 2020

21. GPT-3 Generates Faces

This surreal example shows how GPT-3 can be instructed in natural language to generate realistic-looking faces to your requirements. Check out the interesting Twitter post showcasing this:

AI-generated faces using natural language powered by GPT-3. All these generated faces do NOT exist in real life. They are machine-generated.

It’ll be handy if you want to use models in your mock designs. #ArtificialIntelligence #gpt3 #ML pic.twitter.com/OiC4ht3fw7

— Surya Raj (@_suryaraj_) September 5, 2020

Is the OpenAI GPT-3 AI Threat Real?

For all the advantages OpenAI GPT-3 brings, we cannot neglect the major risk it poses. After seeing these OpenAI GPT-3 demos, people might think it uses common sense to answer questions, but the model does not actually know what these words mean or the context in which they should be used. Nor is the model supplied with any kind of semantic understanding component that helps it decipher the words and topics it works on.

After people went overboard exaggerating OpenAI’s GPT-3 results, Sam Altman, co-founder and CEO of OpenAI, tweeted to make people aware that it is not the perfect AI people have always envisioned and that it has its own weaknesses.

The GPT-3 hype is way too much. It’s impressive (thanks for the nice compliments!) but it still has serious weaknesses and sometimes makes very silly mistakes. AI is going to change the world, but GPT-3 is just a very early glimpse. We have a lot still to figure out.

— Sam Altman (@sama) July 19, 2020

Indeed, it all comes down to how well you can craft the starting “prompt” so that GPT-3 gives the results you expect. Manuel Araoz, who has been doing many experiments with the GPT-3 beta, some of which we listed here, shares a similar view in his tweet.

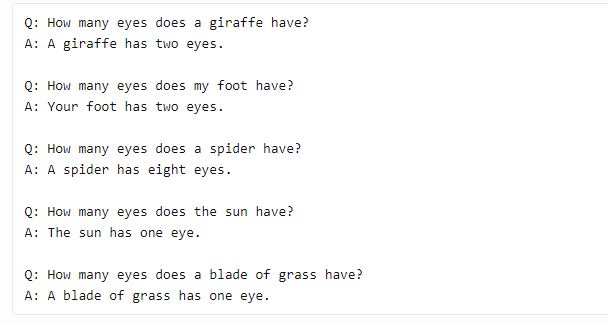
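Several of the demos above mention “priming” the model with a handful of examples before asking the real question. The sketch below shows one way such a starting prompt could be assembled as plain text. The `Input:`/`Output:` layout and the `build_few_shot_prompt` helper are assumptions made for illustration, not a format required by OpenAI, and no API call is made here.

```python
def build_few_shot_prompt(task: str, examples: list, query: str) -> str:
    """Assemble a text prompt: task description, worked examples, then the query.

    The model is expected to continue the text after the final "Output:".
    """
    lines = [task, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_few_shot_prompt(
        task="Rewrite each sentence in a polite tone.",
        examples=[
            ("Send me the report now.", "Could you please send me the report?"),
            ("You are late again.", "I noticed you arrived a little late today."),
        ],
        query="Fix this bug immediately.",
    )
    print(prompt)
```

Because the model simply continues the text it is given, the choice and wording of the examples have a large effect on what comes back.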

In fact, once you analyze the behavior of GPT-3, it is easy to trick it into giving nonsensical answers. Look at the answers by GPT-3 below. (Source)

In spite of its weaknesses, no one can deny that the OpenAI GPT-3 demos are really mind-blowing, and they will once again open the debate about potential misuse for generating fake information, just like deepfakes.


Conclusion

After looking at these OpenAI GPT-3 demos and examples on Twitter, we can say that OpenAI GPT-3 has taken long strides toward human-level AI, but it is still a model that answers questions based on its training and not through common sense.

I am Palash Sharma, an undergraduate student who loves to explore and gain in-depth knowledge in fields like Artificial Intelligence and Machine Learning. I am captivated by the wonders these fields have produced with their novel implementations, and I want to share my knowledge with others as best I can.
